How AI Search Platforms Choose Their Sources: A Deep Research Analysis of Citation Patterns

Deep research into AI search platforms has uncovered systematic patterns in how ChatGPT, Google AI Overviews, and Perplexity select and cite their sources. These AI systems don't choose references randomly; they follow a three-stage process that favors specific domains, content types, and authority signals. Understanding these AI citation mechanisms is essential for anyone pursuing AI visibility through Generative Engine Optimization (GEO). This analysis examines citation concentration metrics, source quality patterns, and the specific content characteristics that increase the likelihood of being cited by AI search platforms.

The Three-Stage Source Selection Process in AI Search

AI search platforms operate through a structured three-stage selection framework that determines which sources appear in generated responses. This process combines static training knowledge with real-time web retrieval, filters content through credibility algorithms, and synthesizes citations based on authority signals.

Stage 1: Information Retrieval from Training Data and Live Web

AI models begin source selection through two distinct retrieval pathways. The first relies on training data: patterns learned from text corpora, books, websites, and licensed datasets ingested during the model's development phase. This model-native synthesis generates answers from probabilistic knowledge stored within the AI system itself, offering speed and coherence but creating risk of hallucination since the model produces text from memory rather than verifiable sources [1].

The second pathway involves Retrieval-Augmented Generation (RAG), where AI systems perform live web searches to ground responses in current information. When a query requires recent data or specialized knowledge beyond training data, the AI model searches external sources, retrieves relevant documents or snippets, then synthesizes responses based on those live results [2]. Perplexity positions itself specifically as a retrieval-first platform, defaulting to query, live search, synthesize, and cite behavior. ChatGPT accesses live data through explicit browsing features and plugins that enable web search capabilities, while Google AI Overviews integrate directly with Google's live search index and Knowledge Graph [1].

Most non-Google platforms rely on Bing's Web Search API for live retrieval, making Bing indexation critical for AI Citation visibility. Since speed matters for conversational AI search, systems reference only top results from their databases when generating responses [3].

Stage 2: Authority Weighting and Credibility Assessment

Following retrieval, AI systems evaluate source trustworthiness through multi-layered credibility algorithms. The platforms prioritize content that represents ideas clearly, aligns with established understanding across multiple sources, can be summarized without changing meaning, and fits into broader knowledge conversations [2].

Cross-source consistency functions as a primary trust mechanism. Brands mentioned positively across at least four different non-affiliated platforms were 2.8x more likely to appear in ChatGPT responses compared to brands only mentioned on their own websites [4]. This multi-source validation process identifies conflicting information and favors sources with clearer, evidence-supported positions [5].

E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) signals serve as computational verification tools. AI systems cross-reference author backgrounds, validate credentials, and assess content depth to determine expertise levels. Essentially, the platforms evaluate whether content demonstrates clear authority through verifiable credentials, proprietary data, research methodology transparency, and entity recognition across trusted digital properties [5].

Stage 3: Citation Synthesis and Brand Positioning

The final stage transforms retrieved and validated sources into cited references within generated responses. Not all retrieved pages become citations; only those the AI model determines most relevant and authoritative receive attribution. The system combines trained understanding with real-time content, creating responses that cite selected sources contributing specific information to the answer [2].
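The three stages described above can be sketched as a toy pipeline. This is an illustrative simplification, not any platform's actual implementation: the `Candidate` fields, the `select_citations` function, the score ranges, and the thresholds are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    url: str
    relevance: float  # hypothetical Stage 1 retrieval score in [0, 1]
    authority: float  # hypothetical Stage 2 credibility score in [0, 1]


def select_citations(candidates, top_k=10, min_authority=0.5, max_citations=3):
    """Toy three-stage selection: retrieve, filter by credibility, then cite."""
    # Stage 1: keep only the top-k retrieved results by relevance.
    retrieved = sorted(candidates, key=lambda c: c.relevance, reverse=True)[:top_k]
    # Stage 2: drop candidates below the (assumed) credibility threshold.
    credible = [c for c in retrieved if c.authority >= min_authority]
    # Stage 3: cite the few sources with the highest combined score.
    ranked = sorted(credible, key=lambda c: c.relevance * c.authority, reverse=True)
    return [c.url for c in ranked[:max_citations]]


pages = [
    Candidate("https://example.com/a", relevance=0.90, authority=0.90),
    Candidate("https://example.com/b", relevance=0.95, authority=0.20),
    Candidate("https://example.com/c", relevance=0.50, authority=0.80),
]
print(select_citations(pages, max_citations=2))
```

In this sketch the most relevant page (`/b`) is retrieved but never cited because it fails the credibility filter, which mirrors the point that not all retrieved pages become citations.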

Brand search volume predicts AI visibility more reliably than traditional SEO metrics, with correlation strength of 0.334 making brand popularity the strongest citation predictor. Citation positioning within responses creates compounding advantages, as brands mentioned in the first two sentences receive 5x more consideration than those appearing later in AI-generated text. Specific prompt language influences this synthesis stage; words like "trusted" appear in prompts generating citations 5.77% more often [4].

Platform-Specific Citation Behaviors Across Major AI Systems

Analysis of 30 million citations across ChatGPT, Google AI Overviews, and Perplexity reveals that each platform operates within fundamentally different source ecosystems [6]. Only 11% of domains receive citations from both ChatGPT and Perplexity, indicating that optimization strategies effective for one platform rarely translate directly to another [7].
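A domain-overlap figure like the one above can be computed from two lists of cited domains. The sketch below assumes the share is measured against the union of both platforms' domains (a Jaccard-style ratio); the source does not state which denominator the study used, and the function name and sample domains are illustrative.

```python
def shared_domain_share(domains_a, domains_b):
    """Share of all distinct domains that are cited by both platforms."""
    a, b = set(domains_a), set(domains_b)
    union = a | b
    if not union:
        return 0.0
    # Assumption: the denominator is the union of both platforms' domains.
    return len(a & b) / len(union)


chatgpt_domains = ["wikipedia.org", "reddit.com", "forbes.com"]
perplexity_domains = ["reddit.com", "youtube.com"]
print(f"{shared_domain_share(chatgpt_domains, perplexity_domains):.0%}")
```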

ChatGPT's Wikipedia-Heavy Citation Pattern (47.9% of Top Sources)

ChatGPT exhibits extreme concentration toward encyclopedic reference materials. Within its top 10 most-cited sources, Wikipedia accounts for 47.9% of citations, making it by far the dominant reference point [8][6]. When measured across all citations rather than just top sources, Wikipedia still represents 7.8% of total ChatGPT citations [9]. This preference reflects the platform's training emphasis on authoritative, comprehensive reference materials that provide broad topical coverage rather than narrow expertise.

The remaining citation distribution among ChatGPT's top sources shows Reddit at 11.3%, Forbes at 6.8%, G2 at 6.7%, and TechRadar at 5.5% [8]. ChatGPT processes over 3 billion prompts monthly [10], generating roughly 0.9 billion visits to the top 1,000 websites as of June 2025 [3]. The platform retrieves approximately six times more pages than it actually cites, with 85% of retrieved pages never receiving attribution [11].

Google AI Overviews' Balanced Multi-Platform Approach

Google AI Overviews demonstrates markedly different source distribution patterns compared to ChatGPT. Reddit emerges as the leading citation source at 21%, followed closely by YouTube at 18.8% [6]. This balanced approach reflects Google's integration with its broader ecosystem and emphasis on diverse content types including video, community discussions, and professional networks.

Wikipedia receives substantially lower priority in Google AI Overviews, appearing in just 5.7% to 7% of citations [12][13]. The platform also incorporates professional content sources like LinkedIn and Gartner alongside social platforms [6]. A separate analysis puts YouTube's citation share higher, at 29.5%, underscoring video content's elevated status within Google's AI search framework [14]. Google AI Overviews now appear in 16-25% of all searches depending on query type, reaching 1.5 billion users monthly [7].

Perplexity's Community-Driven Source Preference (Reddit 6.6%)

Perplexity shows the most extreme community platform concentration among major AI search systems. Reddit represents 46.7% of Perplexity's top citations, nearly twice Wikipedia's share [6][13]. When measured across all citations rather than top sources specifically, Reddit accounts for 6.6% of total Perplexity citations [15][16]. The platform handles 780 million searches per month and accounts for 15-20% of AI-driven website traffic in the US [15].

Perplexity averages 21.87 citations per question compared to ChatGPT's 7.92 citations per response [17]. This citation-heavy approach reflects Perplexity's positioning as a retrieval-first search engine rather than a conversational AI. The platform uses search-first RAG that cross-references multiple sources before citing, operating against a proprietary index of over 200 billion URLs [3].

Cross-Platform Citation Volume Comparison

ChatGPT commands 87.4% of all AI chatbot referral traffic globally. Perplexity is separately reported to capture approximately 15% of global AI referral traffic despite representing a smaller fraction of overall search volume [3]. Query patterns differ substantially as well: Perplexity shows 70.5% single-query behavior while ChatGPT demonstrates only 32.7% single-query usage [17]. These divergent citation mechanics create distinct optimization requirements for each platform.

Citation Concentration and Source Diversity Metrics

Quantifying citation inequality across AI platforms requires economic distribution metrics typically applied to wealth concentration. The Gini coefficient measures inequality on a scale from 0 (perfect equality) to 1 (perfect inequality), revealing how AI search systems concentrate citations among a small set of dominant sources [18].

Gini Coefficient Analysis: OpenAI (0.83) vs Google (0.69) vs Perplexity (0.77)

OpenAI models exhibit the highest citation inequality with a Gini coefficient of 0.83, followed by Perplexity at 0.77 and Google at 0.69 [19]. These values indicate severe concentration, particularly for OpenAI, where citation distribution resembles winner-take-all economics rather than equitable source diversity [5]. OpenAI's pattern suggests heavy reliance on its most frequently cited sources, whereas Google models display more dispersed citation behavior [19].

The difference in inequality levels connects directly to the breadth of unique sources each platform references. Perplexity cites substantially more unique news sources (1,430) than Google (881) or OpenAI (707). This long tail of rarely cited domains contributes to Perplexity's Gini coefficient being higher than Google's despite lower concentration among its top sources, an apparent paradox in which broader source inclusion registers statistically as higher inequality [19].
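The long-tail effect on the Gini coefficient can be illustrated with a small calculation. The sketch below uses the standard rank-weighted Gini formula on made-up citation counts; the two distributions are hypothetical and are not taken from the cited study.

```python
def gini(counts):
    """Gini coefficient of non-negative citation counts (0 = equal shares)."""
    values = sorted(counts)
    n, total = len(values), sum(values)
    if n == 0 or total == 0:
        return 0.0
    # Rank-weighted form: G = 2 * sum(i * x_i) / (n * total) - (n + 1) / n,
    # where x_i are the counts in ascending order and i runs from 1 to n.
    weighted = sum(rank * x for rank, x in enumerate(values, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


# Hypothetical platform A: three sources share all 100 citations.
narrow = [50, 30, 20]
# Hypothetical platform B: weaker leaders plus 35 domains cited once each.
broad = [30, 20, 15] + [1] * 35

for name, counts in (("narrow", narrow), ("broad", broad)):
    top_share = max(counts) / sum(counts)
    print(f"{name}: top source {top_share:.0%}, Gini {gini(counts):.2f}")
```

In this toy data the broad distribution has a smaller top-source share (30% versus 50%) yet a higher Gini coefficient (about 0.58 versus 0.20), because the many single-citation domains widen the gap between the least-cited and most-cited sources.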

Top 20 Sources Account for 67.3% of OpenAI Citations

The top 20 most frequently cited news sources capture 67.3% of all OpenAI citations, demonstrating extreme concentration among a small domain set [19]. In contrast, Google and Perplexity show less severe concentration, with their top 20 sources representing 31.9% and 28.5% of citations respectively [19][5]. The leading source also differs by platform: OpenAI most frequently cites reuters.com, Google favors indiatimes.com, and Perplexity prefers bbc.com [19].
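A top-N concentration share of this kind is straightforward to compute from a flat list of cited domains. The following is a minimal sketch; the function name and sample data are illustrative, and the study's exact counting rules (for example, how subdomains are merged) are not specified in the source.

```python
from collections import Counter


def top_n_share(cited_domains, n=20):
    """Fraction of all citations that go to the n most-cited domains."""
    counts = Counter(cited_domains)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    top = sum(count for _, count in counts.most_common(n))
    return top / total


# Hypothetical citation log: one entry per citation.
citations = ["reuters.com"] * 3 + ["bbc.com", "indiatimes.com"]
print(f"Top-1 share: {top_n_share(citations, n=1):.0%}")
```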

Vertical-specific analysis reveals approximately 30 domains control 67% of AI citations per topic, with the top 10 domains capturing 46% of citations in any given sector. Education demonstrates the most concentrated pattern where the top 10% of domains capture 59.5% of all citations, making new entry exceptionally difficult [20].

Impact of Long-Tail Domain Distribution on Citation Equity

The long-tail effect shapes citation equity significantly: 64.3% of cited domains appeared only once across the entire dataset [21]. Commercial .com domains dominate with over 80% of citations, while non-profit .org sites capture 11.29%. Country-specific domains collectively represent approximately 3.5% of citations, with tech-focused TLDs like .io and .ai showing notable presence despite being newer domains [9]. This distribution pattern creates practical barriers for new entrants seeking AI Visibility, as citation mechanisms favor established domains with existing cross-platform recognition.

Political Bias and Quality Patterns in AI-Cited Sources

Political orientation patterns in AI-cited sources reveal systematic left-leaning tendencies across all major platforms, though quality standards remain consistently high regardless of political positioning.

Left-Leaning Bias: 98% Combined Left-Center Citations

News citations concentrate overwhelmingly among left-leaning and center sources, with combined left-center citations representing approximately 98% across all AI search platforms. OpenAI models demonstrate the highest proportion of center sources at 85.2%, compared to Google at 75.2% and Perplexity at 72.8%. For left-leaning sources specifically, Google models show the highest citation rate at 26.3%, followed by Perplexity at 23.6% and OpenAI at 14.5% [19].

This pronounced bias manifests consistently despite different retrieval mechanisms and training approaches across platforms. All three model families exhibit significant left-leaning patterns in their news source citations, even when applying statistical controls for various confounding factors [19].

High-Quality Source Preference: OpenAI 96.2%, Google 92.2%, Perplexity 89.7%

Quality metrics show strong preference for high-credibility sources across all platforms, with OpenAI models demonstrating the highest proportion at 96.2%, followed by Google models at 92.2% and Perplexity models at 89.7%. These quality thresholds apply a 0.5 score cutoff to classify sources as high-quality [19].

The top 20 most frequently cited news sources by each model family are predominantly center and left-leaning sources and overwhelmingly high-quality, confirming the pattern observed in broader distribution analysis. Notably, AI search systems adapt their news citation behavior to question types, mirroring patterns from traditional search engines [19].

Right-Leaning Sources Represent at Most 1.2% Across Platforms

Right-leaning sources capture minimal citation share across all AI search platforms. Perplexity shows the highest proportion at 1.2%, while Google cites right-leaning sources at 0.8% and OpenAI at just 0.3% [19]. This extreme underrepresentation creates citation asymmetry that favors progressive and centrist publications by substantial margins.

User Preference Shows No Correlation with Political Leaning or Quality

Regression analysis reveals that neither the proportion of news citations nor the characteristics of cited news sources correlate with user preference at statistically significant levels [19]. This finding indicates that users do not systematically prefer responses based on political leaning or source quality scores, creating a disconnect between AI Citation patterns and actual user satisfaction metrics.

What Makes AI Platforms Trust and Cite a Source

Trust mechanisms in AI search operate through quantifiable content signals that platforms evaluate before citation. These characteristics span statistical depth, institutional credibility, external validation, domain type, and technical structure.

Statistical Specificity: 30-40% Higher Visibility with Data and Citations

Content containing specific numerical data receives 30-40% higher visibility in AI responses compared to qualitative content [22]. The Princeton GEO study found that adding statistics increases visibility by 22%, while adding quotations from named experts improves visibility by 37% [4]. Citing authoritative sources produces the strongest impact, with up to 115% visibility increase for sites ranked in position #5 [23]. AI algorithms specifically evaluate numerical data with clear sources and dates, statistical trends, quantified outcomes, industry benchmarks, and survey data from reputable organizations. Content meeting the 30% rule, where at least 30% consists of verifiable facts and statistics, is treated as authoritative by AI systems [22].
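A rough way to audit a draft against the 30% rule is to measure how many sentences contain a number. This is only a crude proxy under stated assumptions: the source does not define how the 30% is measured, a digit does not make a claim verifiable, and the function name and sentence-splitting rule here are illustrative.

```python
import re


def numeric_sentence_share(text):
    """Fraction of sentences containing at least one digit (crude proxy)."""
    # Assumption: sentences end with '.', '!' or '?' followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    if not sentences:
        return 0.0
    with_numbers = sum(1 for s in sentences if re.search(r"\d", s))
    return with_numbers / len(sentences)


draft = "Revenue grew 12% in 2024. The team was pleased. Churn fell to 3%. Plans continue."
share = numeric_sentence_share(draft)
print(f"{share:.0%} of sentences contain a number; meets 30% target: {share >= 0.30}")
```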

Institutional Authority and E-E-A-T Signal Recognition

AI platforms evaluate Experience, Expertise, Authoritativeness, and Trustworthiness as citation triggers. Content from authors with visible credentials receives 40% more citations than identical content without attribution [24]. Moreover, 96% of AI citations go to sources with strong E-E-A-T signals [25]. The mechanisms prioritize transparent contact information, HTTPS encryption, accurate content with citations, and clear author bylines with credentials [26].

Cross-Source Consistency as a Citation Reinforcement Mechanism

AI systems cross-verify information across multiple sources before citing [1]. Brands present on 4+ platforms demonstrate 2.8× higher citation likelihood compared to single-platform presence [24]. Web mentions correlate 3× more strongly with AI visibility than backlinks, with correlation coefficients of 0.664 versus 0.218 [2]. Inconsistency across sources reduces AI confidence, while consistent messaging increases citation probability [1].
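The "3× more strongly" comparison above is a ratio of two correlation coefficients. The sketch below shows a plain Pearson correlation and the ratio of the two reported values; it assumes the coefficients are Pearson correlations, which the source does not confirm, and the sample series are made up.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length >= 2")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (spread_x * spread_y)


# Hypothetical per-brand data: web mentions and AI citation counts.
mentions = [10, 40, 25, 80, 55]
ai_citations = [2, 9, 4, 15, 12]
print(f"sample correlation: {pearson(mentions, ai_citations):.2f}")

# Ratio of the coefficients reported in the text (mentions vs. backlinks).
print(f"reported ratio: {0.664 / 0.218:.2f}")
```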

Domain Authority: .com Domains Capture 80%+ of Citations

Commercial .com domains represent over 80% of all AI citations [1]. Non-profit .org sites capture 11.29% as the second most-cited category [9]. Country-specific domains collectively account for approximately 3.5% of citations [9].

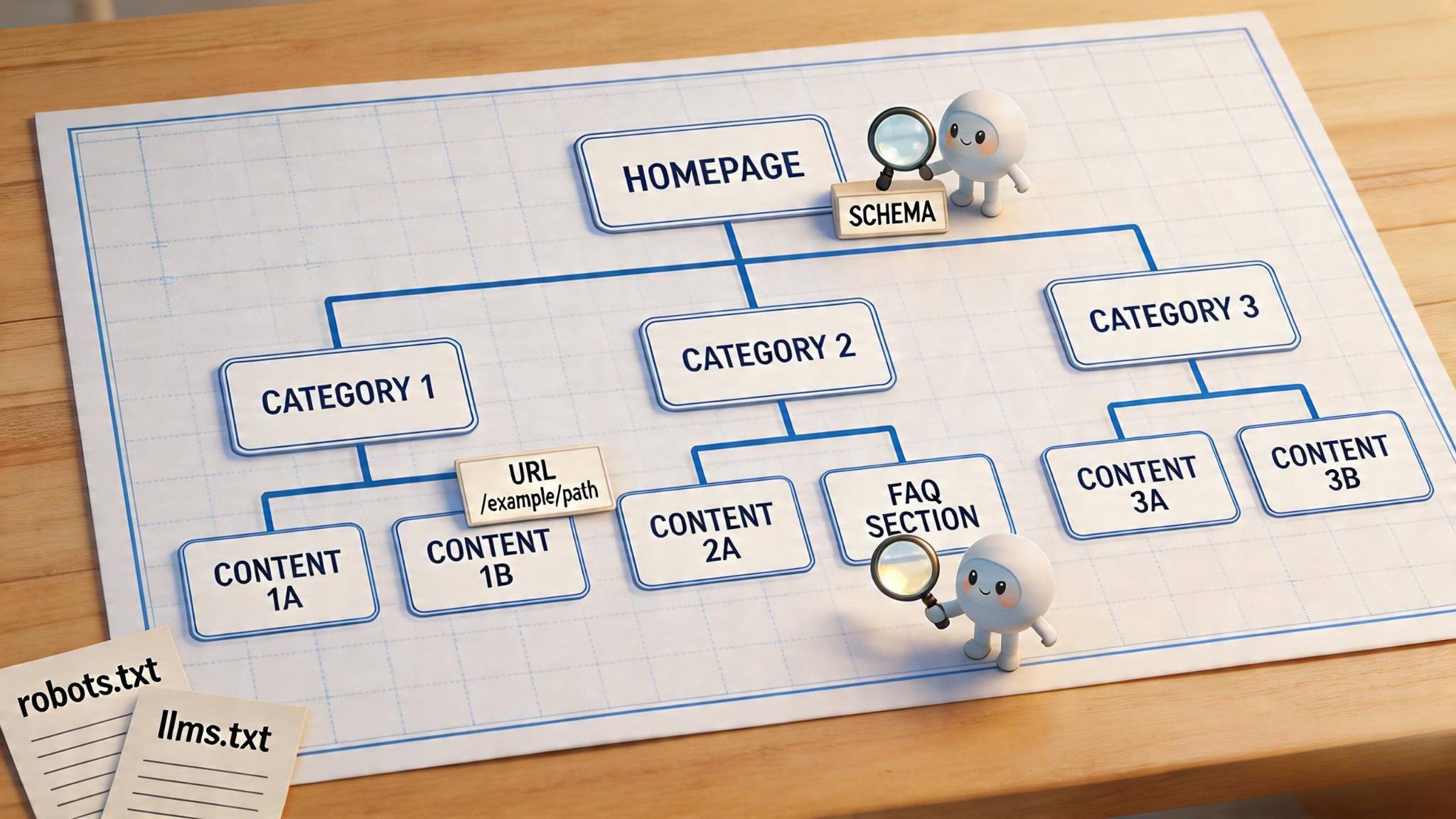

Structured Content and Schema Markup Advantages

Content with comprehensive structured data receives 28% more citations than identical content without schema markup [24]. Pages with schema implementation show 36-47% higher citation rates [27]. JSON-LD schema markup increases citation probability by 340% compared to unstructured content [28]. FAQPage schema demonstrates the highest citation probability among all schema types [27]. Content updated within 30 days receives 3.2× more AI citations, with 76.4% of Perplexity's top-cited pages updated in that timeframe [2].
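For readers unfamiliar with FAQPage markup, the sketch below builds a schema.org FAQPage object as JSON-LD. The helper name and the sample question are illustrative; the output is meant to be embedded in a page inside a `<script type="application/ld+json">` tag, and nothing here guarantees any particular citation outcome.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld([
    ("What is Generative Engine Optimization?",
     "The practice of improving how often AI search platforms cite a source."),
])
print(markup)
```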

Conclusion

AI search platforms follow systematic, measurable patterns when selecting sources rather than choosing at random. ChatGPT's reliance on Wikipedia, Google's balanced approach, and Perplexity's community-driven preferences create distinct optimization pathways for each platform. Citation concentration metrics reveal winner-take-all economics, with top sources capturing disproportionate visibility.

Content creators seeking AI visibility should prioritize statistical specificity, cross-source consistency, and structured data implementation. The 30-40% visibility boost from numerical data and the 340% citation probability increase from schema markup represent actionable opportunities. Understanding these platform-specific citation mechanics transforms AI optimization from guesswork into a strategic, evidence-based methodology.

References

[1] - https://www.getmentioned.co/blog/blog-ai-visibility-gap-report

[2] - https://www.linkedin.com/pulse/domain-authority-vs-ai-citation-what-data-says-adrien-thomas-l0w3c

[3] - https://authoritytech.io/blog/chatgpt-vs-perplexity-vs-google-ai-overviews-b2b-pipeline-2026

[4] - https://thedigitalbloom.com/learn/2025-ai-citation-llm-visibility-report/

[5] - https://www.emergentmind.com/topics/ai-answer-engine-citation-behavior

[6] - https://cybernews.com/news/chatgpt-wikipedia-google-reddit/

[9] - https://www.tryprofound.com/blog/ai-platform-citation-patterns

[11] - https://searchengineland.com/chatgpt-retrieves-more-pages-than-it-cites-study-472349

[12] - https://www.azoma.ai/insights/the-sources-chatgpt-cites-the-most-per-query-type

[13] - https://www.seroundtable.com/chatgpt-google-aio-sources-39578.html

[14] - https://ziptie.dev/blog/google-ai-overviews-source-selection/

[15] - https://doctorrank.com/perplexity-ai-for-healthcare

[16] - https://ziptie.dev/blog/what-determines-which-sites-perplexity-cites-first/

[17] - https://www.qwairy.co/blog/provider-citation-behavior-q3-2025

[19] - https://arxiv.org/html/2507.05301v1

[20] - https://www.growth-memo.com/p/the-science-of-how-ai-picks-its-sources

[22] - https://koanthic.com/en/statistics-boost-ai-citations-complete-2026-guide/

[24] - https://beamtrace.com/blog/ai-citation-optimization

[25] - https://ziptie.dev/blog/eeat-for-ai-search/

[26] - https://hashmeta.com/ai-search-optimization-guide/the-e-e-a-t-framework-for-ai-search/

[27] - https://wpriders.com/schema-markup-for-ai-search-types-that-get-you-cited/

[28] - https://www.growth-rocket.com/blog/structured-data-for-ai-beyond-schema-markup/